Business Situation and Requirements

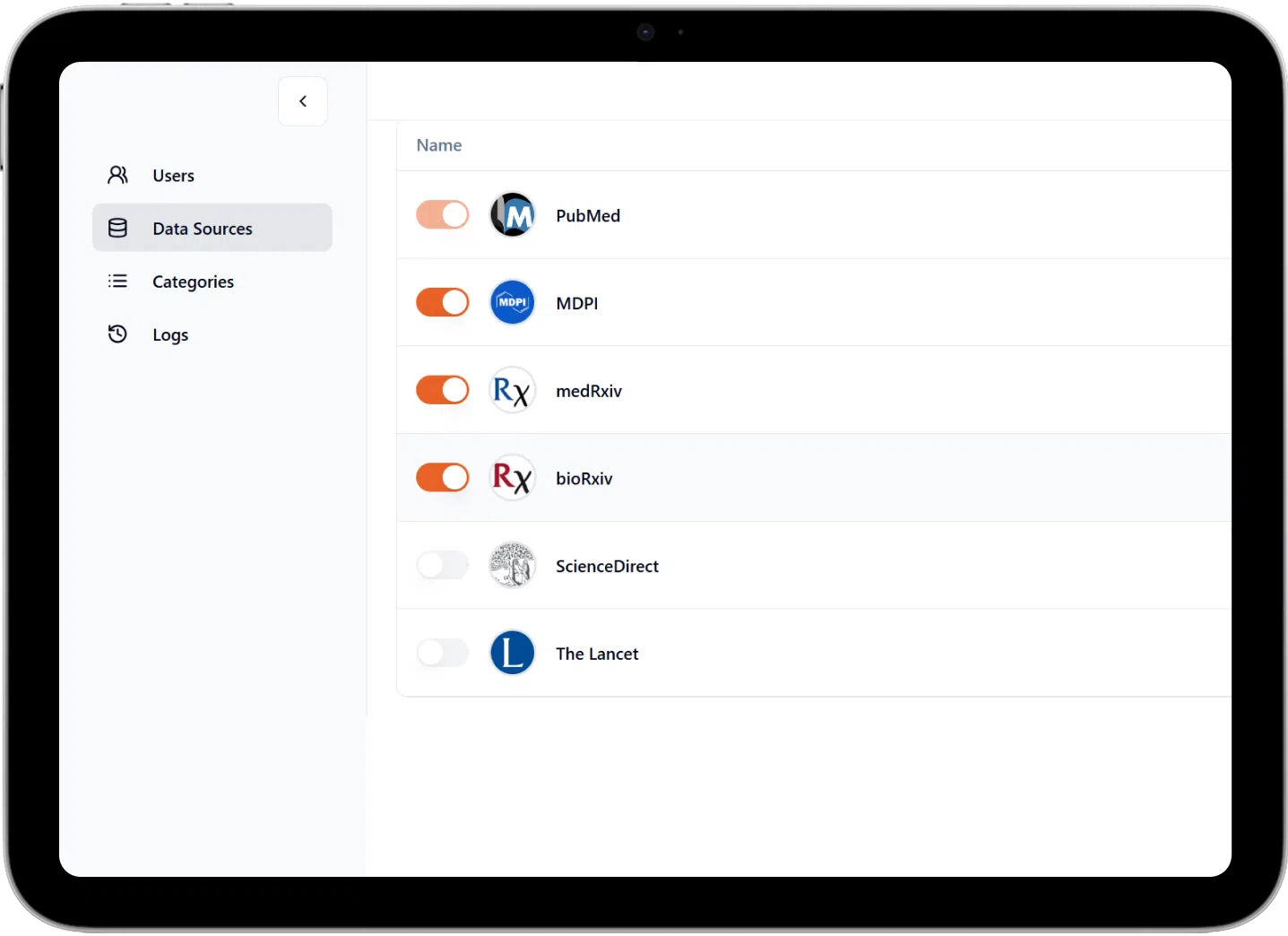

Life sciences researchers rely on platforms like PubMed, PubMed Central, ScienceDirect, MDPI, bioRxiv, and arXiv to keep up with pharmaceutical research. They first run broad searches, then screen the results for relevance and accuracy. However, many studies appear on more than one platform, creating duplicates that must be removed manually. Large result sets also need further refinement, and database limits often force a single search to be split into several. The process is therefore repetitive and time-consuming.

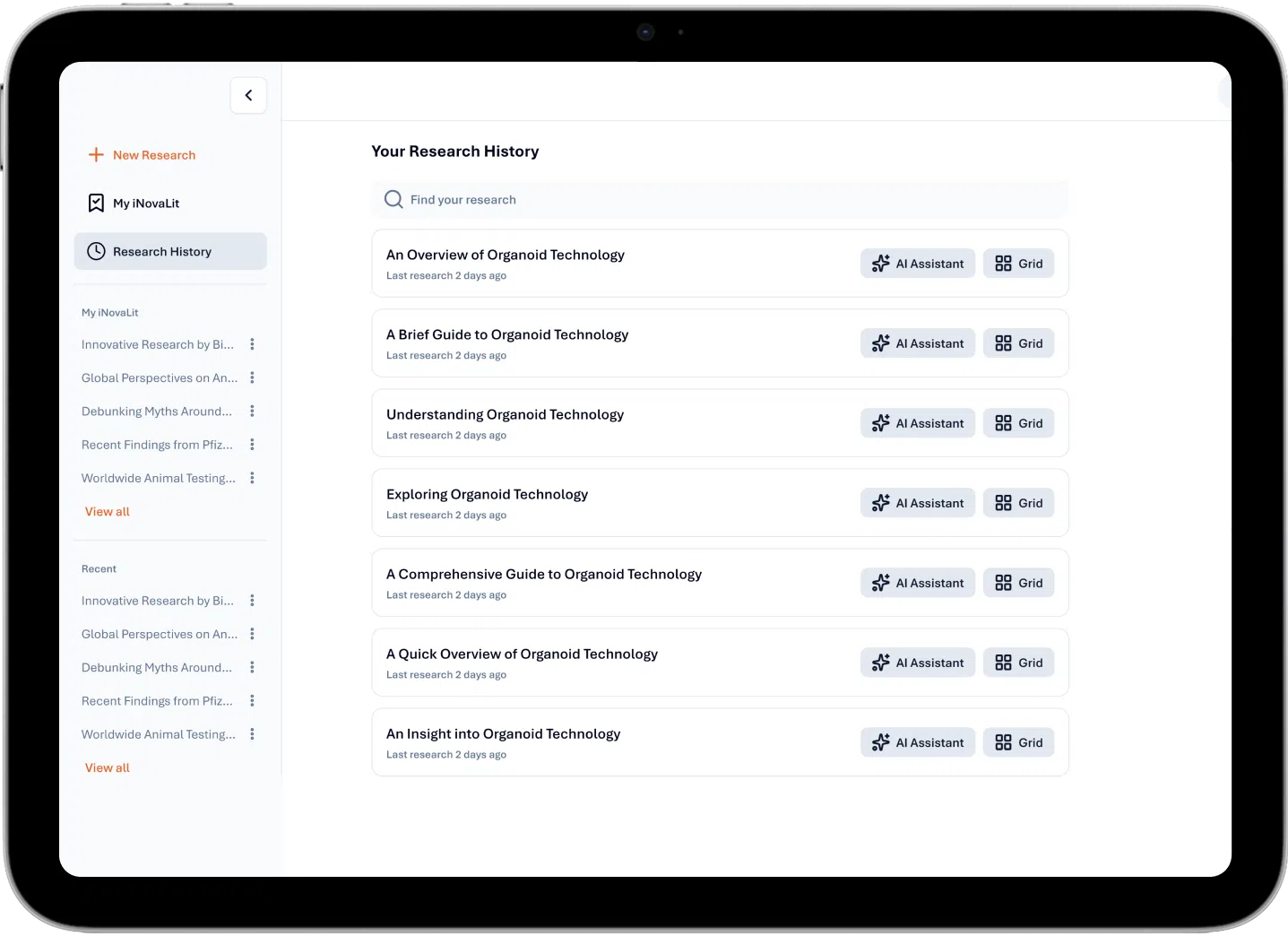

As a result, recurring briefs and newsletters can take up to 30 hours to prepare. To improve efficiency, the client partnered with Unthinkable to build an AI-powered assistant that streamlines aggregation, validation, and report generation in one interface, while researchers retain full control over review.

The client's key requirements included:

Create a unified platform that pulls data from multiple databases and supports both natural language and structured searches.

Automatically detect and remove duplicates using DOI and publication metadata.

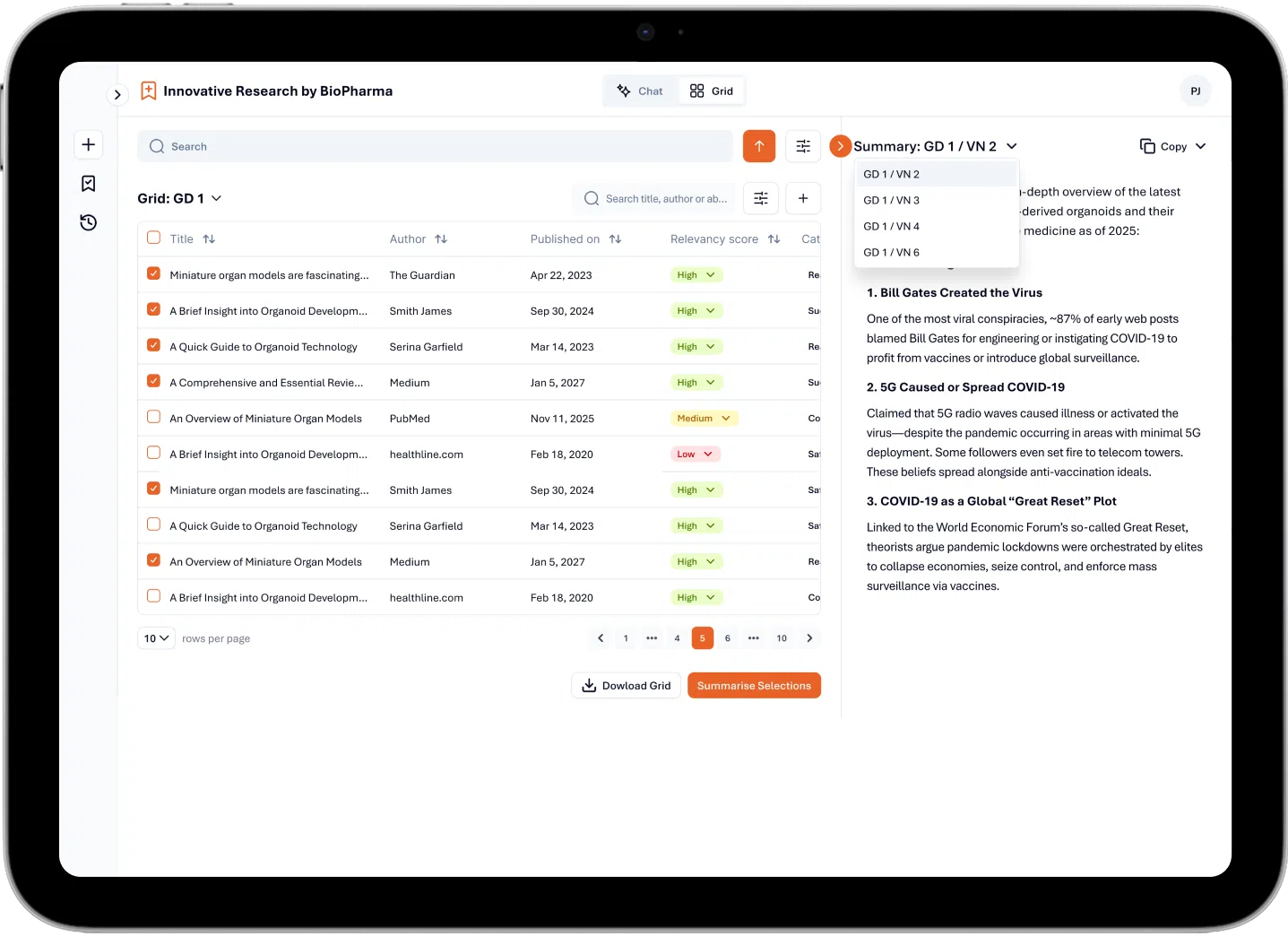

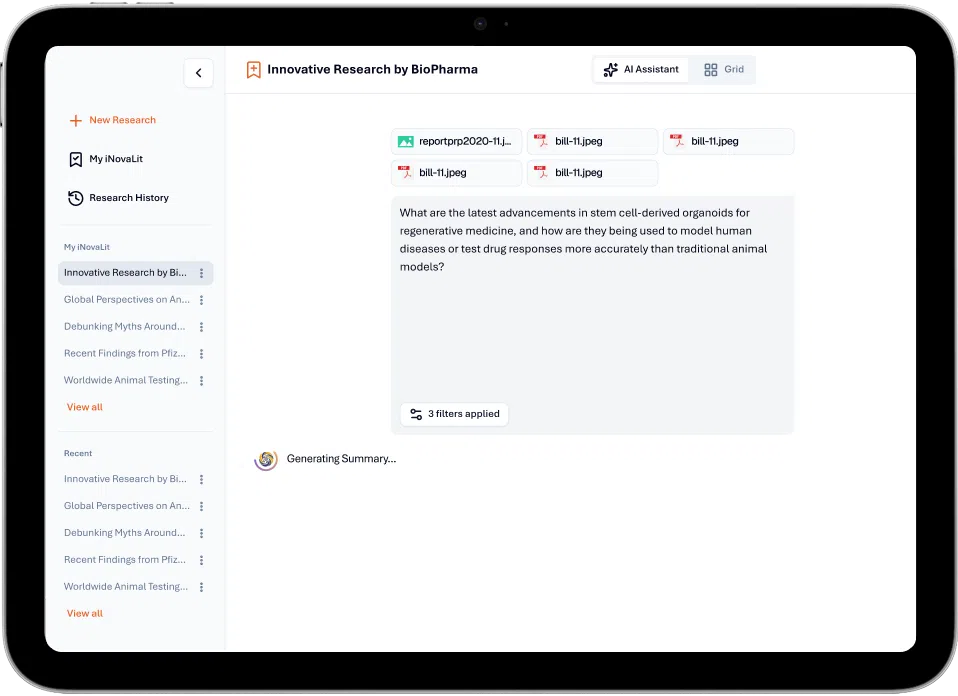

Use AI to validate and score relevance, while letting researchers review and adjust selections.

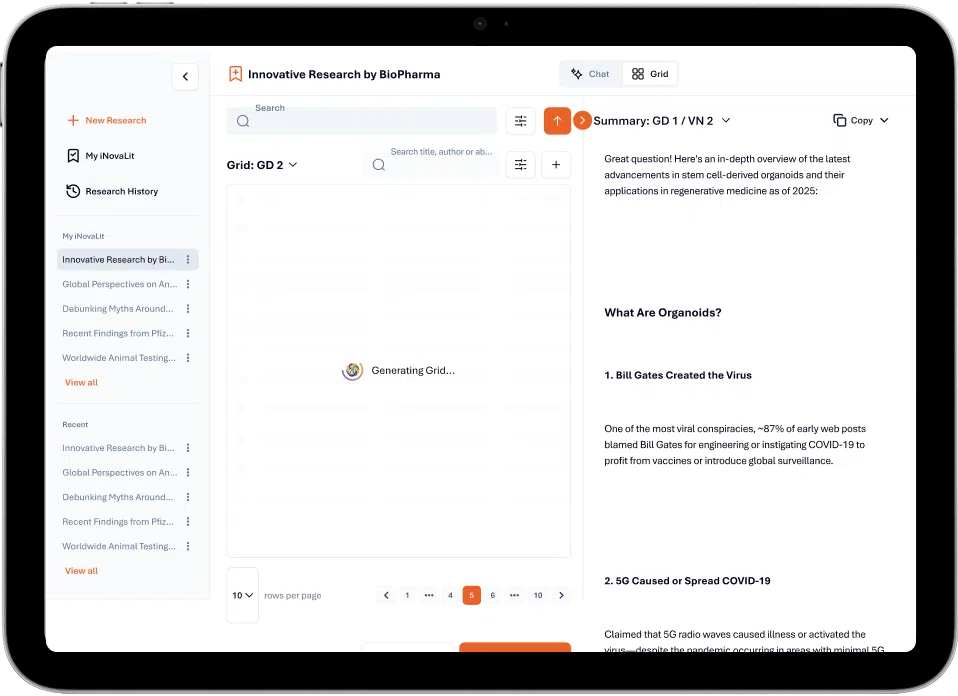

Automate repetitive tasks like filtering, categorizing, summarizing, and refining large result sets.

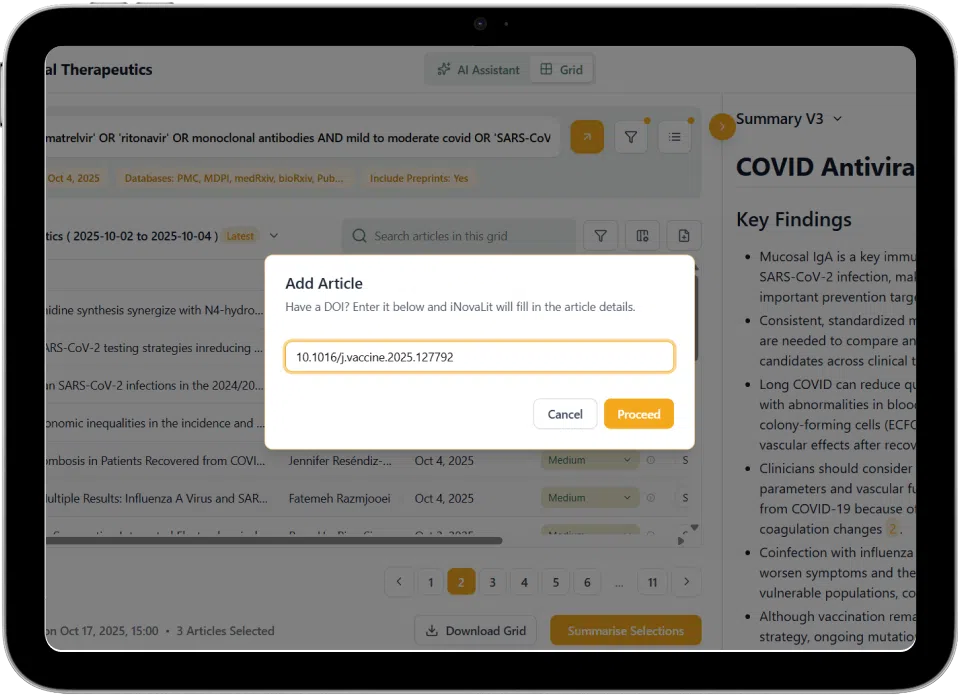

Give researchers control to update metadata, manage article status, and add records via DOI.

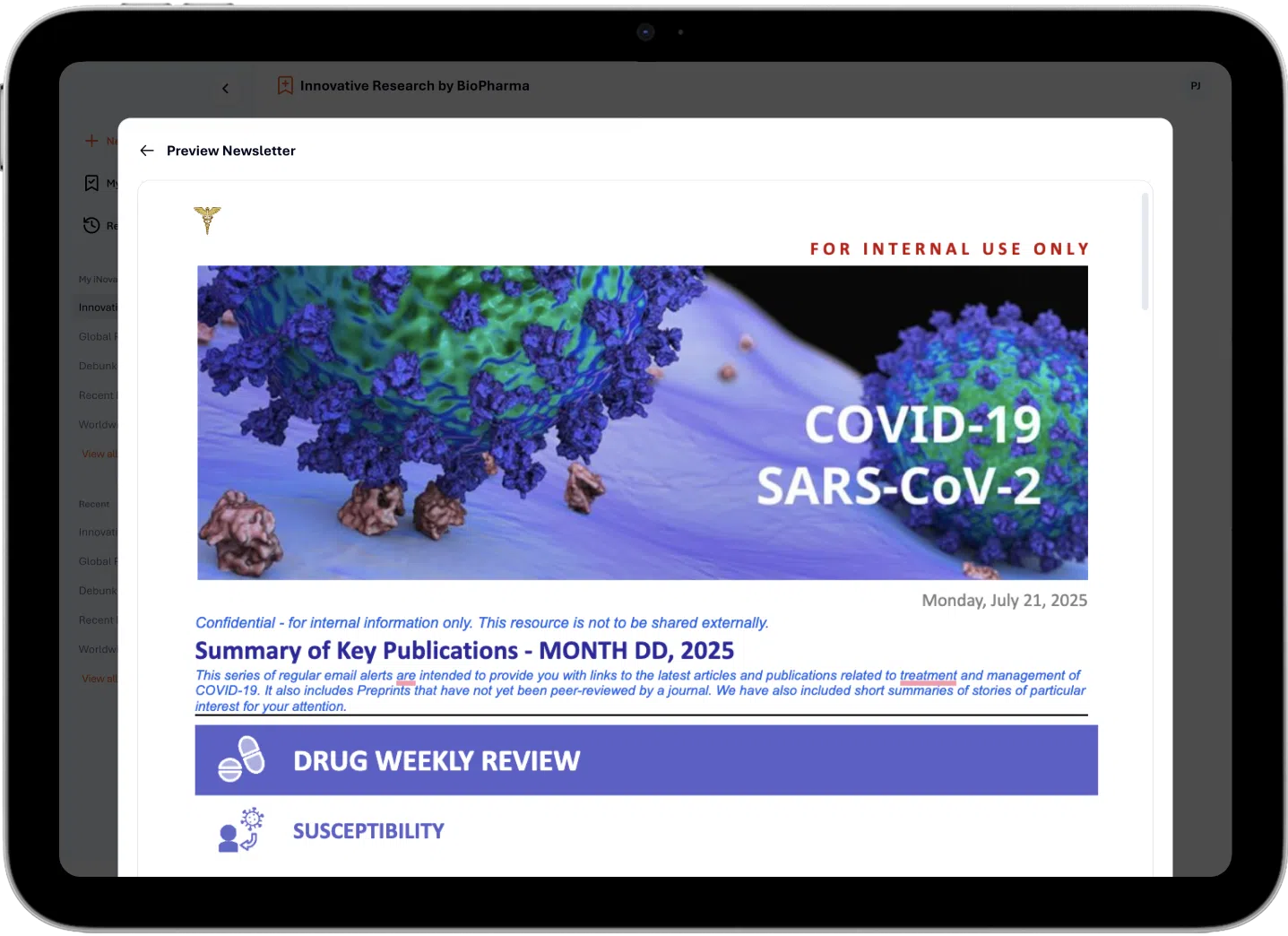

Produce structured outputs, including PDFs, reference lists, and newsletters, while tracking updates across reporting cycles.
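
To make the deduplication requirement concrete, here is a minimal Python sketch of matching records by DOI with a fallback to publication metadata. It is an illustration under stated assumptions, not the delivered implementation: the `Record` fields, the choice of title, year, and first author as the metadata key, and the normalization rules are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass
class Record:
    # Hypothetical record shape; the real platform's schema is not described in the source.
    title: str
    year: int
    first_author: str
    source: str
    doi: Optional[str] = None


def normalize_doi(doi: Optional[str]) -> Optional[str]:
    """Lowercase a DOI and strip common URL or 'doi:' prefixes."""
    if not doi:
        return None
    cleaned = doi.strip().lower()
    cleaned = re.sub(r"^(https?://(dx\.)?doi\.org/|doi:\s*)", "", cleaned)
    return cleaned or None


def metadata_key(rec: Record) -> Tuple[str, int, str]:
    """Build a fallback key from title, year, and first author (an assumed choice)."""
    title = re.sub(r"[^a-z0-9]+", " ", rec.title.lower()).strip()
    return (title, rec.year, rec.first_author.strip().lower())


def deduplicate(records: List[Record]) -> List[Record]:
    """Keep the first occurrence of each article, matching on DOI or metadata."""
    seen_dois: Set[str] = set()
    seen_meta: Set[Tuple[str, int, str]] = set()
    unique: List[Record] = []
    for rec in records:
        doi = normalize_doi(rec.doi)
        meta = metadata_key(rec)
        if (doi is not None and doi in seen_dois) or meta in seen_meta:
            continue  # already seen under the same DOI or the same metadata
        if doi is not None:
            seen_dois.add(doi)
        seen_meta.add(meta)
        unique.append(rec)
    return unique
```

Calling `deduplicate` on the combined results from several databases returns one record per article, keeping whichever copy was encountered first. Because the metadata key ignores the DOI, this sketch would also merge a preprint with its published version when title, year, and first author match. Whether that is desirable is a policy decision that researchers would likely want to review.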