Mar 31, 2026

7 Pillars of Architecture-Led Software Products

This is Part 5 of the Engineering Growth Series. We’ve covered the $20M Architecture Wall, why architecture is the new marketing, why fast teams slow themselves down, and why most roadmaps are borrowed. Each of those pointed to the same underlying truth: the system resists change long before you realize it needs to. This article is about what it looks like when a system is designed to do the opposite.

A Growth Problem That Wasn’t About Growth

The D2C brand we started working with wasn’t broken. It just wasn’t designed to scale.

Campaigns were live. Orders were coming in. The team was working hard. Growth wasn’t flat.

But it wasn’t compounding.

Customer acquisition cost (CAC) wasn’t improving. Retention was inconsistent. Every new experiment (a new offer, a new journey, a new funnel variant) needed engineering involvement before it could go live. And engineering was already stretched.

On the surface, it looked like a marketing problem. Maybe targeting. Maybe creatives. Maybe the wrong channels.

It wasn’t.

The marketing team was capable. The campaigns were reasonably well-structured. The problem was that the system underneath them wasn’t designed to learn. It was designed to execute.

And there’s a significant difference.

What We Found When We Dug In

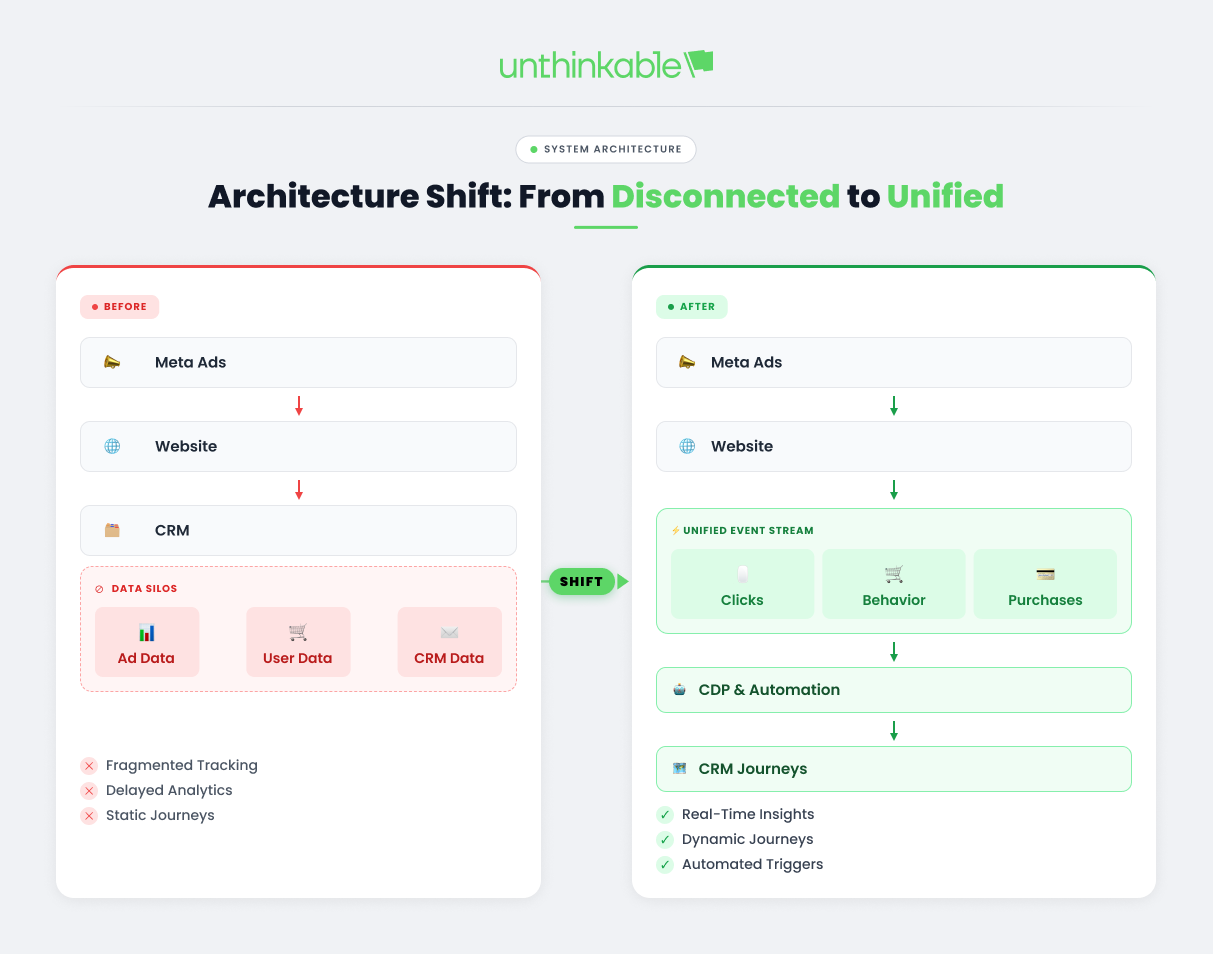

We anchored ourselves around two systems: the acquisition funnel (Meta campaigns → website → checkout) and the retention layer (CRM journeys via their analytics and automation tooling).

We expected the usual things. Campaign inefficiencies. Poor targeting. Weak creatives.

What we found was different.

Attribution didn’t match reality — the data coming out of the funnel wasn’t telling the story the team thought it was telling. Drop-offs were invisible — there was no way to see where users were falling out and why. CRM journeys were static — the same sequences running for every user regardless of where they came in or what they’d done. And every change to any of this required a sprint.

The system was executing. It just wasn’t learning from what it executed.

So instead of fixing the campaigns, we redesigned how the system behaved.

The shift isn’t about adding tools. It’s about connecting what you already have into a system that learns.

What came out of that engagement, and out of several others like it since, is a pattern. Architecture-led organisations share seven properties. They don’t arrive at them all at once. But the best ones build toward all seven, deliberately.

Here’s what they look like — and what their absence looks like in practice.

1. Modularity — Isolation Enables Parallel Progress

Before we touched this brand’s CRM, we had to understand why a “simple” segmentation change had broken campaign triggers three months earlier.

The answer: the CRM logic, the funnel tracking, and the backend were tangled together. Changing one thing meant predicting the impact on everything else. Nobody had a full map. So changes were either avoided or managed with extraordinary caution — which meant they were slow.

Modularity is the property that makes change local.

When event tracking, segmentation logic, and journey triggers are isolated from each other — each with its own boundary and its own owner — a change in one doesn’t cascade into the others. Failures don’t propagate. New experiments don’t require a safety audit of the whole system.
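To make that isolation concrete, here is a minimal Python sketch. It assumes an in-process publish/subscribe bus; `EventBus`, `update_segment`, `trigger_journey` and `broken_experiment` are hypothetical names for illustration, not this brand’s actual stack. Each module subscribes to a tracked event on its own, and an exception in one handler is recorded instead of stopping the others.

```python
from typing import Callable, Dict, List

Handler = Callable[[dict], None]


class EventBus:
    """Routes tracked events to independently owned subscribers."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Handler]] = {}
        self.failures: List[str] = []

    def subscribe(self, event_name: str, handler: Handler) -> None:
        self._subscribers.setdefault(event_name, []).append(handler)

    def publish(self, event_name: str, payload: dict) -> None:
        for handler in self._subscribers.get(event_name, []):
            try:
                handler(payload)
            except Exception as exc:
                # Contain the failure: record it and keep serving other modules.
                self.failures.append(f"{handler.__name__}: {exc}")


# Hypothetical modules: each knows only the event contract, not the others.
segments: Dict[str, str] = {}
triggered: List[str] = []


def update_segment(event: dict) -> None:
    segments[event["user_id"]] = "high_intent"


def trigger_journey(event: dict) -> None:
    triggered.append(f"cart_reminder:{event['user_id']}")


def broken_experiment(event: dict) -> None:
    raise ValueError("bad config")


bus = EventBus()
bus.subscribe("add_to_cart", update_segment)
bus.subscribe("add_to_cart", broken_experiment)
bus.subscribe("add_to_cart", trigger_journey)
bus.publish("add_to_cart", {"user_id": "u1"})
print(bus.failures)  # ['broken_experiment: bad config']
```

Publishing one `add_to_cart` event still updates the segment and fires the journey, even though the experimental handler between them raised an error. In production the boundary would more likely be a message queue or a separate service, but the principle is the same: modules share an event contract, not internals.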

The practical outcome isn’t just cleaner architecture. It’s that two things can move at the same time, independently, without negotiating with each other.

For this brand, decoupling the CRM journeys from the backend logic was the first intervention. Before we built anything new, we needed to make sure that what we built wouldn’t be entangled with what we weren’t touching.

2. Composability — Features Become Configuration, Not Code

Before the rebuild, launching a new offer required a backend change and a deployment.

That’s not an exaggeration. A marketing team wanting to test a “buy 2, get 1 free” mechanic on a specific SKU category during a weekend window needed to raise a ticket, wait for a sprint slot, and then wait for deployment. By the time it went live, the weekend was sometimes gone. Or the team had given up on testing it at all.

Composability means the system is built so that business behaviour can be configured rather than coded.

This is the shift from “how long to build?” to “how do we configure?” The underlying capability exists. The parameters that control it are exposed to the people who need to change them — without requiring an engineer to change code and push a release.
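A minimal sketch of an offer expressed as configuration, using the weekend “buy 2, get 1 free” example above. The `OFFER_CONFIG` fields, `CartItem` and `apply_offer` are hypothetical; the point is that the rule engine is written once, and the offer itself is data that can change without a release.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class CartItem:
    sku: str
    category: str
    price: float


# Hypothetical offer definition: marketing edits this data, not the code below.
OFFER_CONFIG = {
    "id": "weekend_b2g1_tees",
    "type": "buy_x_get_y",
    "category": "t-shirts",
    "buy": 2,
    "free": 1,
    "active_days": [5, 6],  # Saturday and Sunday, as numbered by datetime.weekday()
}


def apply_offer(items: List[CartItem], offer: dict, now: datetime) -> float:
    """Return the discount a buy-X-get-Y offer grants on a cart at time `now`."""
    if now.weekday() not in offer["active_days"]:
        return 0.0
    # Cheapest eligible items first, so the cheapest ones are made free.
    eligible = sorted(i.price for i in items if i.category == offer["category"])
    group_size = offer["buy"] + offer["free"]
    free_count = (len(eligible) // group_size) * offer["free"]
    return float(sum(eligible[:free_count]))


cart = [
    CartItem("TEE-RED", "t-shirts", 20.0),
    CartItem("TEE-BLU", "t-shirts", 25.0),
    CartItem("TEE-BLK", "t-shirts", 30.0),
    CartItem("JEAN-01", "jeans", 50.0),
]
print(apply_offer(cart, OFFER_CONFIG, datetime(2026, 4, 4)))  # a Saturday: 20.0
```

Launching a different mechanic (another category, another window, “buy 3, get 1”) means editing the dictionary rather than `apply_offer`. In practice that dictionary would likely live in a config store behind an admin screen.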

For this brand, we moved offer logic, journey triggers, and audience conditions into a configuration layer. Once that was in place, the marketing team could launch a new offer over lunch, monitor it by evening, and iterate before the week was out.

The experiments didn’t get faster because the engineers got faster. They got faster because the experiments stopped depending on engineers.

3. Observability — You Can’t Fix What You Can’t See

Here’s what we found when we looked at the CRM layer on this engagement.

Journeys were running. The automation tool was active. Sequences were configured. On paper, the retention system existed.

But when we traced the event data feeding those journeys, the picture changed. Events were firing inconsistently. User properties were being recorded with mismatched values. Some triggers were referencing attributes that weren’t being populated at all. The journeys weren’t broken in an obvious way — no errors, no alerts — but the data flowing into them was corrupted at the source. The system was sending messages, just not to the right people, at the right time, based on the right behaviour.

The result: CRM was generating near-zero attributable repeat revenue. Not because the team hadn’t built anything. Because what they’d built was running blind.

Observability means the system tells you what’s happening as it happens — and at the level of granularity where the truth actually lives.

Before we rebuilt a single journey, we sanitised the data layer. Every meaningful event — product views, add-to-cart, purchase, return visit, discount usage — was re-instrumented and validated. Only once the signal was clean did we start rebuilding the journeys on top of it.
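The validation step can be sketched as checking every incoming event against a tracking plan. The event names, required properties and `validate_event` function below are assumptions for illustration, loosely based on the events listed above. They target the failure modes described earlier: attributes that are never populated and values recorded with the wrong type, neither of which raises an error downstream.

```python
from typing import Dict, List

# Hypothetical tracking plan: required properties and their expected types.
TRACKING_PLAN: Dict[str, Dict[str, type]] = {
    "product_viewed": {"user_id": str, "sku": str},
    "add_to_cart": {"user_id": str, "sku": str, "quantity": int},
    "purchase": {"user_id": str, "order_id": str, "revenue": float},
    "return_visit": {"user_id": str},
    "discount_used": {"user_id": str, "code": str},
}


def validate_event(event: dict) -> List[str]:
    """Return a list of problems; an empty list means the event is clean."""
    name = event.get("event")
    if name not in TRACKING_PLAN:
        return [f"unknown event: {name!r}"]
    props = event.get("properties", {})
    problems = []
    for key, expected in TRACKING_PLAN[name].items():
        if props.get(key) is None:
            problems.append(f"{name}.{key} missing")
        elif not isinstance(props[key], expected):
            problems.append(
                f"{name}.{key} expected {expected.__name__}, "
                f"got {type(props[key]).__name__}"
            )
    return problems


bad = {"event": "purchase",
       "properties": {"user_id": "u1", "order_id": "o1", "revenue": "49"}}
print(validate_event(bad))  # ['purchase.revenue expected float, got str']
```

Events that fail can be quarantined or counted on a dashboard instead of silently feeding journey triggers.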

We’re now working through seven journeys with a specific goal: 15–20% of revenue coming from repeat customers. That number isn’t arbitrary. It’s what the unit economics of this business require to make acquisition spend sustainable.
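As a worked example of the metric, here is a sketch that computes repeat revenue share from an order log. It assumes “repeat revenue” means revenue from a customer’s second and later orders. Some teams instead count all revenue from customers with two or more orders, which gives an equal or higher number, so the definition needs to be fixed before the target is. `Order` and `repeat_revenue_share` are hypothetical names.

```python
from datetime import date
from typing import List, NamedTuple


class Order(NamedTuple):
    customer_id: str
    placed_on: date
    revenue: float


def repeat_revenue_share(orders: List[Order]) -> float:
    """Share of revenue from orders placed after a customer's first order."""
    seen = set()
    repeat = total = 0.0
    for order in sorted(orders, key=lambda o: o.placed_on):
        total += order.revenue
        if order.customer_id in seen:
            repeat += order.revenue  # second or later order for this customer
        seen.add(order.customer_id)
    return repeat / total if total else 0.0


orders = [
    Order("a", date(2026, 1, 5), 100.0),
    Order("b", date(2026, 1, 9), 100.0),
    Order("c", date(2026, 1, 12), 100.0),
    Order("a", date(2026, 2, 2), 50.0),
    Order("b", date(2026, 2, 20), 50.0),
]
print(f"{repeat_revenue_share(orders):.0%}")  # 25% in this toy data
```

Tracked over a rolling window, the same calculation shows whether the journeys are moving the business toward the 15–20% band.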

We couldn’t have set that target — or built toward it — without first being able to see clearly. The journeys aren’t the hard part. The visibility is.

4. Reversibility — Make Decisions That Are Easy to Undo

The reason experiments were rare wasn’t just that they were slow to launch. It was that they were risky to launch.

A broken CRM journey — one that fired the wrong trigger or sent the wrong message to the wrong segment — could sit undetected for days, quietly burning through an audience. Rolling it back required identifying the problem, raising it, fixing it, testing the fix, and deploying it. Hours, sometimes longer.

When rollback is painful, teams stop experimenting. Not because they lack ideas. Because the cost of being wrong is too high.

Reversibility is the property that makes being wrong cheap.

Feature flags, controlled rollouts, A/B testing layers, and fallback mechanisms all serve the same purpose: they make it possible to move forward without making irreversible commitments. You can launch to 10% of your audience before you launch to all of them. You can revert in minutes rather than hours.
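Percentage rollouts are commonly implemented by hashing each user into a stable bucket and comparing it with a rollout percentage held in configuration. The sketch below assumes that approach; `FLAGS`, `in_rollout`, `journey_for` and the journey names are hypothetical, and a feature-flag service would normally provide this. Because the bucket depends only on the user and the flag name, the same 10% stays in the test, raising the percentage only adds users, and setting `enabled` to `False` reverts everyone without a deployment.

```python
import hashlib
from typing import Dict, Optional

# Hypothetical flag store; in practice edited via a config service, not in code.
FLAGS: Dict[str, dict] = {
    "journey_v2": {"enabled": True, "rollout_percent": 10},
}


def bucket(user_id: str, flag_name: str) -> int:
    """Deterministically map a user to a bucket in [0, 100) for one flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest[:8], 16) % 100


def in_rollout(user_id: str, flag_name: str,
               flags: Optional[Dict[str, dict]] = None) -> bool:
    flags = FLAGS if flags is None else flags
    flag = flags.get(flag_name)
    if flag is None or not flag["enabled"]:
        return False  # kill switch: one config change reverts every user
    return bucket(user_id, flag_name) < flag["rollout_percent"]


def journey_for(user_id: str) -> str:
    """Route a user to the new journey variant only if they are in the rollout."""
    return "welcome_v2" if in_rollout(user_id, "journey_v2") else "welcome_v1"
```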

For this brand, we introduced controlled rollouts on journey changes and offer mechanics. A new journey variant could be tested on a small cohort, monitored with the event tracking we’d built, and either expanded or rolled back based on what the data showed — all without a deployment.

The experiments got faster. But more importantly, the team’s willingness to experiment went up. When being wrong is cheap, you try more things.

5. Team Autonomy — Architecture Determines Org Structure

Conway’s Law is usually read in one direction: organisations design systems that mirror their own communication structures. But it works in both directions.

If your architecture is tightly coupled, your teams will be tightly coupled too. Marketing will depend on engineering for execution. Engineering will become a bottleneck not because they’re slow, but because every domain runs through them.

Before this engagement, minor journey changes required sprint cycles. Not because they were technically complex — many weren’t — but because they touched systems that engineering owned, and engineering had a queue.

Team autonomy means that the people closest to a decision can execute on it without waiting for someone else’s bandwidth.

This requires architectural boundaries that match organisational ones. When CRM logic is isolated and configurable, the CRM team owns it — fully, without needing to coordinate a deployment with engineering. When offer logic is in a configuration layer, marketing can manage it directly. Engineering is freed to work on the things that actually require engineering.

The outcome for this brand wasn’t just faster marketing cycles. It was a healthier working relationship between the teams. Engineering stopped being the bottleneck everyone resented. Marketing stopped being the requester who never got what they needed fast enough. Both teams started operating at their actual capacity.

6. Economic Alignment — Cost Awareness Built Into the System

Campaigns were scaling. Spend was increasing. But nobody had a clear view of which segments were converting at what cost, or whether the acquisition economics made sense at a unit level.

This is more common than it should be. Growth teams often have visibility into top-line spend and top-line revenue. What they don’t have is a clear line connecting a specific campaign, to a specific acquisition cost, to a specific conversion rate, to a specific customer lifetime value.

Without that line, budget allocation is opinion. And opinions tend to follow whoever argues loudest, not whoever’s numbers are most accurate.

Economic alignment means the system makes cost and outcome visible at the level where decisions actually get made — not just in end-of-month reports, but in the tooling the team uses day to day.

We connected the event tracking layer to spend data, which meant the team could see — in near real-time — which campaigns were bringing in customers who converted, retained, and spent more over time, and which were bringing in customers who bought once and disappeared.
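The line from campaign to cost to value can be sketched as a join between spend and attributed customers. This sketch assumes single-touch attribution (each customer credited to exactly one campaign) and uses revenue over a fixed window as a stand-in for lifetime value; `campaign_economics`, `CampaignReport` and the campaign names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple, Optional


class CampaignReport(NamedTuple):
    customers: int
    cac: Optional[float]            # spend / customers acquired
    revenue_per_customer: float     # windowed revenue, a stand-in for LTV
    return_on_cac: Optional[float]  # revenue per customer / CAC


def campaign_economics(
    spend: Dict[str, float],        # campaign -> spend
    attributed: Dict[str, str],     # customer_id -> campaign
    revenue: Dict[str, float],      # customer_id -> revenue within the window
) -> Dict[str, CampaignReport]:
    by_campaign: Dict[str, List[str]] = defaultdict(list)
    for customer_id, campaign in attributed.items():
        by_campaign[campaign].append(customer_id)

    report = {}
    for campaign, cost in spend.items():
        ids = by_campaign.get(campaign, [])
        if not ids:
            report[campaign] = CampaignReport(0, None, 0.0, None)
            continue
        cac = cost / len(ids)
        per_customer = sum(revenue.get(c, 0.0) for c in ids) / len(ids)
        ratio = per_customer / cac if cac else None
        report[campaign] = CampaignReport(len(ids), cac, per_customer, ratio)
    return report


report = campaign_economics(
    spend={"prospecting": 1000.0, "retargeting": 600.0},
    attributed={"c1": "prospecting", "c2": "prospecting", "c3": "prospecting",
                "c4": "prospecting", "c5": "retargeting", "c6": "retargeting"},
    revenue={"c1": 100.0, "c2": 100.0, "c5": 450.0, "c6": 450.0},
)
for name, row in report.items():
    print(name, row.cac, row.return_on_cac)
```

In this toy data retargeting has the higher CAC (300 against 250) but returns 1.5x its acquisition cost within the window, while prospecting returns 0.2x. Top-line spend and revenue alone cannot show that comparison.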

The budget allocation shifted. Not dramatically, not overnight. But it shifted toward what was actually working, because for the first time, what was actually working was visible.

7. Intelligence by Design — Systems That Generate Insight, Not Just Data

The last pillar is the one most teams think they already have. They have dashboards. They have reports. They have analytics.

But most of that is reactive. Someone notices a number has moved, exports data, runs an analysis, and arrives at an answer — three days after the thing that caused the move has already played out.

Intelligence by design means the system surfaces what matters before someone thinks to ask.

For this brand, the corrupted data problem wasn’t just a CRM problem. It was an intelligence problem. The system had no reliable way to answer the most basic questions: Which customers are likely to come back? Which campaigns brought in customers who repeated? Which journeys actually influenced behaviour versus which ones just fired into the void?

Once the event layer was clean, those questions became answerable. The team could see which acquisition cohorts were showing repeat signals and which weren’t. They could see which journey touchpoints were influencing purchase behaviour and which were being ignored.
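A minimal sketch of surfacing repeat signals without waiting to be asked: compute each acquisition cohort’s repeat rate and flag cohorts well below a baseline. The cohort names, the `baseline` value and the default `tolerance` of 0.5 are hypothetical parameters for illustration. In practice a check like this would run on a schedule and post flagged cohorts to wherever the team already works.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def cohort_repeat_rates(orders: List[Tuple[str, str]]) -> Dict[str, float]:
    """orders holds one (customer_id, acquisition_cohort) pair per order.

    Returns, per cohort, the share of customers who ordered at least twice.
    """
    counts: Dict[str, Dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for customer, cohort in orders:
        counts[cohort][customer] += 1
    return {
        cohort: sum(1 for n in per_customer.values() if n >= 2) / len(per_customer)
        for cohort, per_customer in counts.items()
    }


def flag_weak_cohorts(rates: Dict[str, float], baseline: float,
                      tolerance: float = 0.5) -> List[str]:
    """Cohorts repeating at less than `tolerance` times the baseline rate."""
    return sorted(c for c, r in rates.items() if r < baseline * tolerance)


orders = [
    ("a", "meta_video"), ("a", "meta_video"), ("b", "meta_video"),
    ("c", "meta_video"), ("c", "meta_video"), ("d", "meta_video"),
    ("e", "meta_discount"), ("f", "meta_discount"), ("g", "meta_discount"),
    ("h", "meta_discount"), ("h", "meta_discount"),
    ("i", "meta_carousel"), ("j", "meta_carousel"), ("k", "meta_carousel"),
]
rates = cohort_repeat_rates(orders)
print(flag_weak_cohorts(rates, baseline=0.3))  # ['meta_carousel']
```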

The 15–20% repeat revenue target we’re building toward isn’t a guess. It’s based on what the clean data is already showing about the cohorts that do repeat — and what it would take to replicate that behaviour at scale.

That’s what intelligence by design means. Not more dashboards. A system that generates the right signal, so the right decisions become obvious rather than argued over.

The Pattern, Summarised

These seven properties aren’t independent. They connect:

- Modularity enables Autonomy — isolated boundaries mean isolated ownership

- Observability enables Reversibility — you can only safely roll back if you can see the impact

- Composability enables Speed — configuration moves faster than deployment

- Intelligence enables Decisions — insight before impact, not after

Together, they describe a system that is scalable, adaptable, and measurable. Not in theory. In the specific, operational sense of: the team can move without asking permission, can see what’s happening as it happens, can experiment without fear, and can make budget decisions based on data rather than intuition.

A Self-Assessment

Most teams are somewhere in the middle — not fully broken, not fully architecture-led. The honest question is where you are.

Ask yourself:

- Can I trace a user’s journey — from the ad they saw to the email they received — in real time?

- Can marketing launch a new offer or journey without raising a ticket?

- When something breaks or underperforms, do I know within hours, or within days?

- Can I safely run a CRM experiment on 10% of my audience and expand if it works?

- Does each team have genuine ownership of their domain, or does everything route through engineering?

- Do I understand which campaigns are bringing in customers worth acquiring, not just customers?

- Does my system tell me what’s going wrong before the numbers reflect it?

If the honest answer to most of those is no — you’re running a system that executes but doesn’t learn. That’s not a failure of your team. It’s a design constraint.

If it’s mixed — you’re in a common and recoverable position. You have pieces of the picture. The work is connecting them.

If it’s mostly yes — you’re operating with architectural leverage. The question is which pillar to deepen next.

The Closing Thought

Most organisations try to solve growth problems by adding: more campaigns, more tools, more headcount.

Architecture-led organisations do something different. They remove the constraints that make adding things necessary in the first place.

The D2C brand we worked with didn’t need a bigger marketing team. They needed a system that allowed the marketing team to move at the speed their ideas required.

That’s what these seven pillars build toward.

Not a better-looking system. A system that learns.

Growth doesn’t stall because teams can’t execute. It stalls because systems can’t adapt.

What’s your score?

Go through the seven questions above and count your honest “yes” answers. Then tell me in the comments where the gaps are.

The patterns across answers are often more revealing than the individual scores. And if mapping your current architecture against these pillars sounds like a useful conversation, it’s one we have regularly with founders and CTOs. It usually takes 30 minutes and is almost always clarifying.